Projects

A diverse set of projects targeting explainable medical AI

To reach the KEMAI research goals, we have put together a diverse set of PhD projects in the areas of computer science, medicine, ethics and philosophy. The following outlines are examples of such projects. We expect KEMAI doctoral researchers to work on one of these projects, or on an alternative project that they propose themselves and that fits under the KEMAI umbrella.

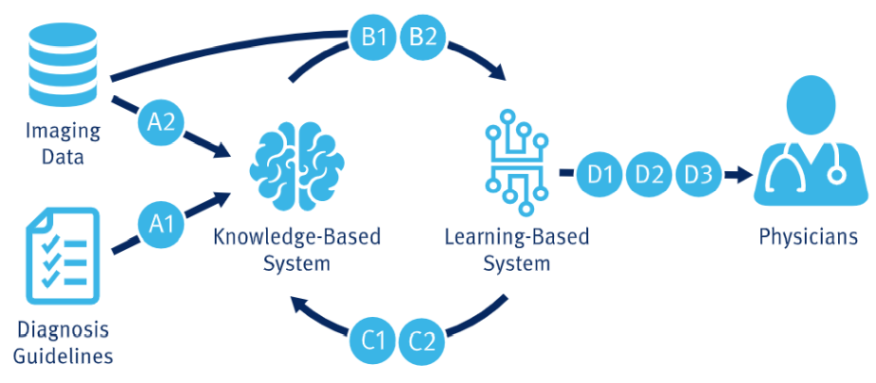

Below is a list of our project proposals, organized by research topic area. These areas of focus include Data Exploitation, which involves harvesting medical guidelines and investigating contrastive pre-training; Knowledge Infusion, which enriches learning models with medical guidelines; Knowledge Extraction, which derives medical guidelines from trained models; and Model Explanation, which communicates the decision process of trained models. Each project is designed to advance our understanding and application of these key areas, contributing to the overall improvement of medical diagnostics and treatments through cutting-edge research and technology.

The following figure shows an overview of how the described projects contribute towards the overall KEMAI mission, which is detailed on the KEMAI mission page.

Data Exploitation

A1 – Prototype-based Concept models from sparse annotated medical images

Project Leads: Jun.-Prof. Götz (Medicine), Prof. Glimm (Computer Science), Dr. Vernikouskaya (Medicine)

Concept-based AI systems are highly beneficial for answering radiological questions, as they combine high performance with inherent explainability. However, obtaining the necessary training data can be challenging. This project aims to address this limitation by investigating novel approaches to automatically extracting the necessary information from existing data sources during the training process. To achieve this, the project will leverage the potential of pre-training approaches, domain adaptation, and automated text extraction using large language models. The resulting solutions will be evaluated using medical tasks, comparing our existing concept-based approach with potential new approaches, both with and without concepts.

A2 – Concept-Based Explainable Computer Vision for Medical Diagnosis

Project Leads: Prof. Ropinski (Computer Science), Prof. Hufendiek (Philosophy), Dr. Lisson (Medicine)

This project develops explainable computer vision methods for medical diagnosis that link model predictions to clinically meaningful visual concepts and decision criteria. It investigates how attention maps, concept-based explanations, and example-based reasoning can be combined to make detection, classification, and segmentation results more transparent. The project evaluates these methods with medical imaging tasks to assess whether they improve interpretability, support error analysis, and increase clinicians’ trust and acceptance of AI-assisted decisions.

Knowledge Infusion

B1 – Neuro-Symbolic Reasoning for Medical Imaging

Project Leads: Prof. Braun (Computer Science), Prof. A. Beer (Medicine)

This project investigates reasoning-based approaches to medical image interpretation, using cancer staging from PET/CT as the primary application domain. It addresses two complementary aspects. First, modularization: rather than learning the full mapping from images to clinical decisions end-to-end, we decompose the problem into individually learnable cause-effect pairs and compose them through explicit reasoning at inference time, exploring this as a strategy for generalization when the combinatorial space of possible cases far exceeds available training data. Second, explanation: we investigate how structured reasoning processes can generate not just predictions but candidate hypotheses with supporting and opposing evidence, serving as a reasoning scaffold that makes the system’s logic transparent and useful to clinicians. The project sits at the intersection of neuro-symbolic AI, medical imaging, and clinical decision support, with the broader aim of understanding where the boundary between learning and reasoning should lie in medical AI systems.

B2 – Trusted and interpretable patterns for mixed-type high-dimensional diagnostics

Project Leads: Prof. Kestler (Medicine), Prof. M. Beer (Medicine)

High-dimensional diagnostics characterise patients using imaging, molecular profiles, and heterogeneous clinical data such as routine laboratory values and questionnaire responses. This project investigates principled approaches to learning and uncertainty quantification in this setting, addressing two complementary aspects. Heterogeneity: rather than treating mixed-type data as a preprocessing problem, we address the challenge of learning directly from inputs of differing scales and types, exploring how specialized feature encodings, distance measures, and weighting schemes can be integrated into a coherent learning framework suited to the high-dimensional, small-sample regime characteristic of clinical diagnostics. Uncertainty: we investigate how, for example, conformal prediction can provide valid confidence bounds for patterns detected in mixed-type data, defining conformity scores that capture both categorical and numerical variables, with the aim of generating not just predictions but trustworthy, interpretable outputs for clinical use. The project is at the intersection of machine learning, uncertainty quantification, and clinical decision support, with the broader aim of making AI-driven diagnostics reliable and transparent in settings where data are heterogeneous, costly to acquire, and limited in quantity.
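As a rough illustration of the uncertainty aspect, the following sketch applies split conformal prediction to records that mix a numerical and a categorical variable. It is not the project's method: the Gower-style distance, the nearest-neighbour ratio used as a nonconformity score, all function names, and the toy records are assumptions chosen only to keep the example small.

```python
import math

def gower_distance(a, b, numeric_ranges):
    """Gower-style distance for mixed-type records (an illustrative choice).

    Numerical features contribute a range-normalised absolute difference,
    categorical features contribute 0 on a match and 1 on a mismatch.
    """
    total = 0.0
    for i, (x, y) in enumerate(zip(a, b)):
        if i in numeric_ranges:
            total += abs(x - y) / numeric_ranges[i]
        else:
            total += 0.0 if x == y else 1.0
    return total / len(a)

def nonconformity(x, label, train, numeric_ranges):
    """Nearest same-label distance divided by nearest other-label distance.

    Small values mean the record looks typical for the candidate label.
    """
    same = min(gower_distance(x, t, numeric_ranges) for t, y in train if y == label)
    other = min(gower_distance(x, t, numeric_ranges) for t, y in train if y != label)
    return same / (other + 1e-9)

def conformal_threshold(scores, alpha):
    """Split-conformal quantile: the ceil((n + 1) * (1 - alpha))-th smallest score."""
    n = len(scores)
    k = math.ceil((n + 1) * (1 - alpha))
    if k > n:
        return math.inf  # too few calibration points for this alpha
    return sorted(scores)[k - 1]

def prediction_set(x, labels, train, numeric_ranges, qhat):
    """All labels whose nonconformity score does not exceed the threshold."""
    return {lab for lab in labels
            if nonconformity(x, lab, train, numeric_ranges) <= qhat}

# Hypothetical toy records: (laboratory value, questionnaire answer) -> label.
train = [((1.0, "no"), "healthy"), ((1.5, "no"), "healthy"),
         ((4.0, "yes"), "disease"), ((4.5, "yes"), "disease")]
calibration = [((1.2, "no"), "healthy"), ((4.2, "yes"), "disease"),
               ((1.4, "no"), "healthy"), ((3.8, "yes"), "disease")]
numeric_ranges = {0: 3.5}  # observed range of feature 0 in the training records
labels = {"healthy", "disease"}

calibration_scores = [nonconformity(x, y, train, numeric_ranges)
                      for x, y in calibration]
qhat = conformal_threshold(calibration_scores, alpha=0.25)
result = prediction_set((1.1, "no"), labels, train, numeric_ranges, qhat)
print(result)
```

Under the usual exchangeability assumption between calibration and test records, prediction sets built this way contain the true label with probability at least 1 - alpha on average. How to design conformity scores that suit real clinical data is exactly the open question the project describes.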

Knowledge Extraction

C1 – Enriching Ontological Knowledge Bases through Abduction

Project Leads: Prof. Glimm (Computer Science), Prof. Kestler (Medicine)

This project uses abductive reasoning based on ontological knowledge to explain ML system outcomes. It focuses on developing practical abduction algorithms for consequence-based procedures, extending the deductive reasoner ELK. The research aims to explicate and exploit hidden knowledge in learned systems, benefiting related projects by providing semantic domain knowledge.

C2 – Investigating Explainability of Computational Vision Mechanisms for Medical Diagnosis

Project Leads: Prof. Neumann (Computer Science), Prof. Ropinski (Computer Science), Jun.-Prof. Götz (Medicine)

The lack of transparency and explainability of computational vision methods reduces trust in, and the verifiability of, decisions made by a vision AI system. Both are of utmost importance for applications in the medical domain. The project focuses on explainability and will investigate the predictions of complex models, such as deep (recurrent) neural networks with their non-linear transformation layers and complex computational nodes. The project aims to construct explanations, e.g., by combining the analysis of model responses to local changes with a quantification of how hidden units grow and evolve over training iterations to act as semantic detectors. Such methods are evaluated using medical imaging tasks to assess whether they improve interpretability, support error analysis, and increase clinicians’ trust and acceptance of AI-assisted decisions.

Model Explanation

D1 – Dual Use Research of Concern with AI-based Medical Diagnoses

Project Leads: Prof. Steger (Ethics), Prof. Ropinski (Computer Science)

This project addresses the ethical analysis of research involving AI and expert systems. Such research falls under Dual Use Research of Concern (DURC) because it can be misused with the intent to harm human dignity, health, freedom, property, or peaceful coexistence. It has become particularly important not only to consider potential military uses and misuses of research, but also to examine how vulnerable systems based on large patient databases are to discrimination, extortion through ransomware, theft of sensitive data, and computer attacks. For example, data of patients with certain diseases can be misused by insurance companies or employers to discriminate against them. Therefore, the issue of security-relevant research and DURC has to be discussed from an ethical perspective in the context of AI-based medical diagnoses. Within this project, the research will concentrate on the evaluation of AI systems with regard to the protection of individuals and the dual use of this technology. A particular focus will lie on identifying vulnerabilities and potential threats related to the use of such systems, e.g., through theft of personal data used in research and clinical applications or through dual use of the developed systems for military or criminal purposes. Moreover, it will be examined how such risks can be minimized, e.g., by restricting the personal data gathered from patients or through built-in protection mechanisms and guidelines on how ethically secured systems can be developed.

D2 – Explainability, Responsibility, and Clinical Judgment in Medical AI

Project Leads: Prof. Hufendiek (Philosophy), Prof. Glimm (Computer Science), Dr. Hauke Berendt (Philosophy)

This primarily philosophical project focuses on medical scenarios in which AI systems generate diagnostic or therapeutic recommendations and thereby become involved in potentially high-stakes decisions. The central question is under what conditions such recommendations can be explained in a way that enables responsible clinical judgment, understood not merely as technical transparency but as a practice of justification sensitive to context, stakeholders, and risk.

Medical PhD Projects (10 months, MD)

The PhD projects outlined above are complemented by medical PhD projects, which are targeted towards medical researchers and round out the technical and ethical projects. The medical PhD projects can be supervised by one of the KEMAI medical partners. In this area, we foresee the following projects:

M1 – Guideline Analysis

This project focuses on systematically analyzing medical guidelines for their integration into AI systems aimed at improving medical diagnostics. The doctoral researcher will assess guidelines, extract relevant rules, and evaluate their impact on AI models with medical practitioners.

M2 – Explanation Ranking

This project aims to evaluate and rank explanation techniques for AI-supported medical diagnoses. The doctoral researcher will develop a taxonomy of explanations and conduct evaluations with medical practitioners to determine their effectiveness.

M3 – Explanation Requirements for COVID-19 CT Imaging

This project focuses on the requirements for explainable AI in COVID-19 CT imaging. The doctoral researcher will analyze the diagnostic process, conduct expert interviews, and propose guidelines for integrating explainable AI.

M4 – Explanation Requirements for Bronchial Carcinoma PET/CT Imaging

Similar to M3, this project addresses the requirements for explainable AI in bronchial carcinoma PET/CT imaging. The research will follow a similar protocol but consider the dual-modality nature of the diagnosis.

M5 – Explanation Requirements for Echinococcosis PET/CT Imaging

Complementing M3 and M4, this project analyzes the requirements for explainable AI in echinococcosis PET/CT imaging. The research will follow a similar protocol, accounting for the rarity of the disease and the resulting sparsity of data.

M6 – LLM Explanation Evaluation

This project investigates the use of large language models (LLMs) to support explainable AI in medical diagnosis. The doctoral researcher will experiment with LLMs, analyze their outputs with medical experts, and identify requirements for future research to enhance their utility.

KEMAI - Research Training Group on Medical XAI
